An agent whose context has been compacted will confidently cite documents it can no longer see. An agent that cannot distinguish a message from its operator from a message relayed by another agent will treat both with equal trust. An agent that does not know where it sits in a pipeline will try to do someone else's job. We observed all of these when building a multi-agent system without giving agents adequate knowledge of their own situation.

The industry is starting to address this, but so far only at the shallowest layer.

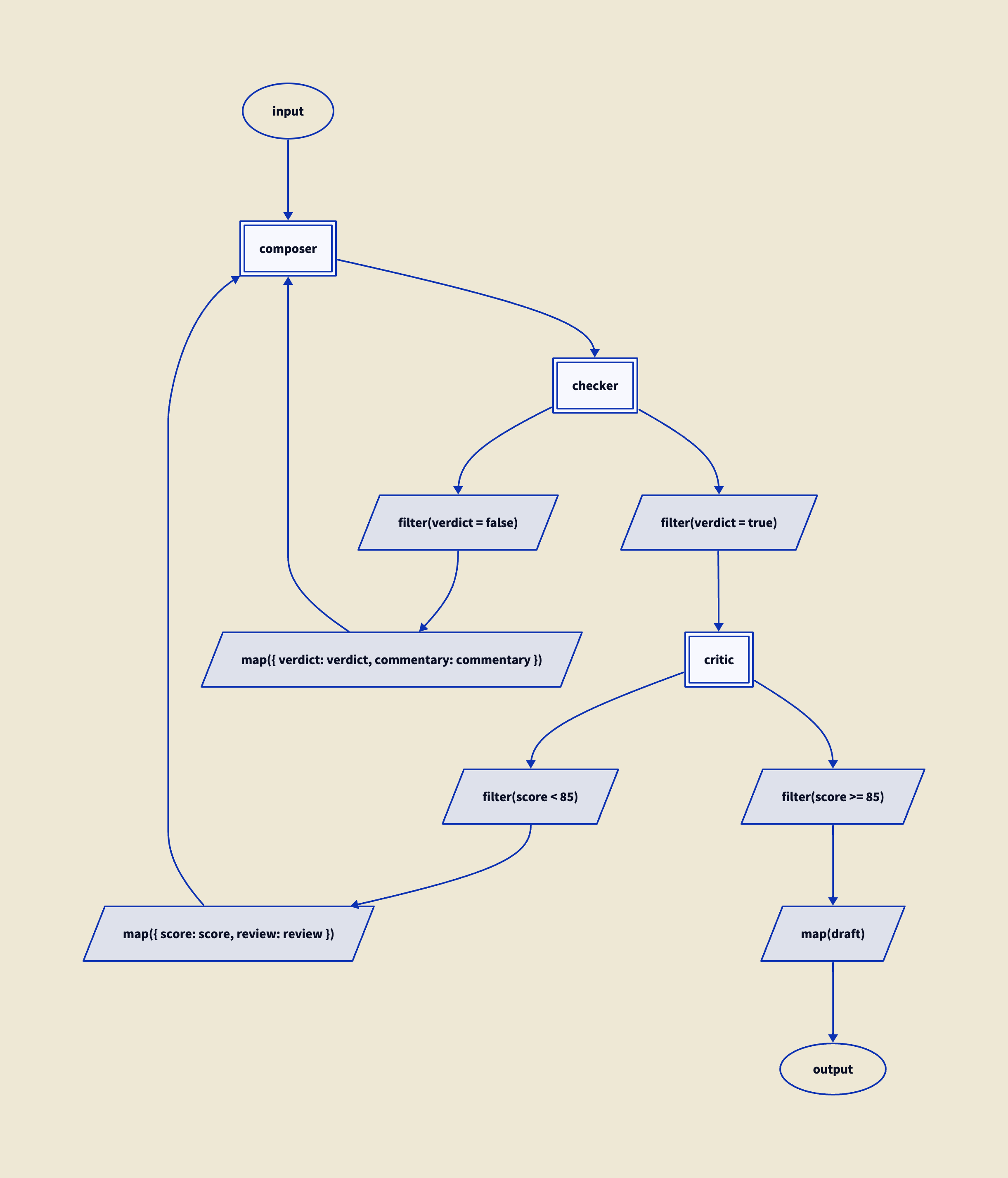

This note describes what we think those layers look like: the kinds of knowledge that make agents more reliable, from the trivial to the theoretically interesting. These are general principles. Any framework for building multi-agent systems can implement them, and we think should. We have implemented them in the plumbing calculus and in the multi-agent system described in our artificial organisations paper, and some of these ideas have already been picked up by others working independently.

The recurring finding, across all these layers, is that agents work better when they know more about themselves and their situation. Not because they become more capable, but because they make fewer mistakes.

The insight behind this is not new. Sycophancy in LLMs is well-documented as arising from training pressure to be helpful and from authority gradients in the interaction; the OpenAI GPT-4o incident of April 2025 confirmed the mechanism at production scale. Confabulation is what happens when an agent lacks information but is not permitted to say so. Both are failures of self-knowledge. The agent does not know enough about its own situation to recognise that the honest response is "I don't know" or "I can't do that."

We encountered the same problem in the artificial organisations work and arrived at what we called the aporia pathway: give the agent a theory of its own operation, a picture of the circumstances it finds itself in, so that it can choose an appropriate course of action instead of collapsing. This generalises what Chen et al. (2025) found empirically in the medical domain: that simply giving an LLM explicit permission to reject illogical requests significantly reduces sycophantic compliance. Permission to refuse is the specific case; understanding your situation well enough to know when to refuse is the general one.

The kinds of self-knowledge described here are the practical realisation of that insight. They fall into four layers: knowledge about the world, knowledge of the agent's own state, knowledge of how the agent relates to the world, and knowledge of what is happening right now. Each builds on the ones before it. It would be silly to give an agent detailed provenance information but not tell it what time it is. The same principle runs through all of them: relieve the pressure by giving the agent a better picture of where it is. Some of these are discussed individually in our research observations; here we present them together.

Current practice

In current practice, most of this information ends up in a single startup file. Claude Code has .claude/instructions.md. Codex has AGENTS.md. Most agent frameworks encourage some kind of file where you describe, in prose, the system the agent operates in. This is the right instinct. But in practice, these files end up containing a mix of everything: "you are a helpful agent" (self-knowledge), "there is a reviewer downstream" (relational knowledge), "use British English" (policy). They are a grab-bag because there is nowhere else to put any of it. The layers we describe here are separated in principle; in current practice they are all flattened into one file. Separating them matters for two reasons. First, the layers have different volatility: the network topology changes rarely, but runtime state changes with every message. Mixing volatile information into a static prefix defeats prompt caching, which is both a performance and a cost problem. Second, and more importantly, the separation reflects a real difference in kind. Policy can be overridden by a sufficiently persuasive prompt. Infrastructure cannot.

Knowledge about the world

An LLM has, baked into its weights, a fairly good and complete set of facts about the world, up to a knowledge cutoff. We augment that with retrieval mechanisms: tools that can fetch documents from slow storage when needed. Ideally the agent knows not just that these tools exist but how they work, and in particular how the information they access is indexed. This is knowledge about the world in general: what is out there, and how to get at it.

The clock belongs here. What time is it? What is today's date? This is a fact about the world, and it is so cheap that there is no reason not to include it with every message from the operator. At the very least, it should be set at the start of a session, but sessions can be long, and the date can change. Without a clock, an agent asked "what happened today?" has no idea what "today" means.

Knowledge of itself

How full is my context window? Have I been compacted? How many tool calls have I made, and how many remain in my budget? How many tokens have I used? This is the agent's awareness of its own operational state.

An agent that knows its context is filling up can take that into account: it might stop making tool calls that bring in more material, or it might tell the operator that something is wrong and its memory needs cleaning. An agent that knows it has been compacted (its earlier context summarised or discarded to free space in the context window) can notice that its memory of earlier material may be incomplete. An agent that knows its tool call budget is nearly exhausted can wrap up rather than starting new work it cannot finish. The agent cannot compel the network to act on this information, but it can adjust its own behaviour, and that turns out to matter.

We found this necessary almost immediately; without it, agents confidently referenced documents that had been compacted out of their context, producing plausible but fabricated citations. A fact-checking agent, for example, would cite specific paragraphs from a source document it had read earlier in the session but that had since been compacted away. The citations looked correct in form but the content was invented. Once we told the agent that compaction had occurred and that its memory of earlier material might be incomplete, it began qualifying its claims and checking its sources instead of confabulating. We describe this problem and its consequences in more detail in "The agent that doesn't know itself," which covers compaction, session protocols, and why agents cannot recognise their own state without being told.

Knowledge of how it relates to the world

Where does this agent sit? Is it talking to an operator, or to another agent? How many other agents are there, and what are they connected to? What is the shape of the system it operates within?

This is static, set once at the start of a session. It is not so much that the agent knows who it is, but that it knows where it is: that there is a network, that it occupies a particular position in it, that its inputs come from specific sources and its outputs go to specific destinations. Without this, agents have no sense of context. They cannot distinguish a direct request from an operator from an intermediate message in a pipeline. They attempt to access material that the architecture places outside their partition, and confabulate when they fail.

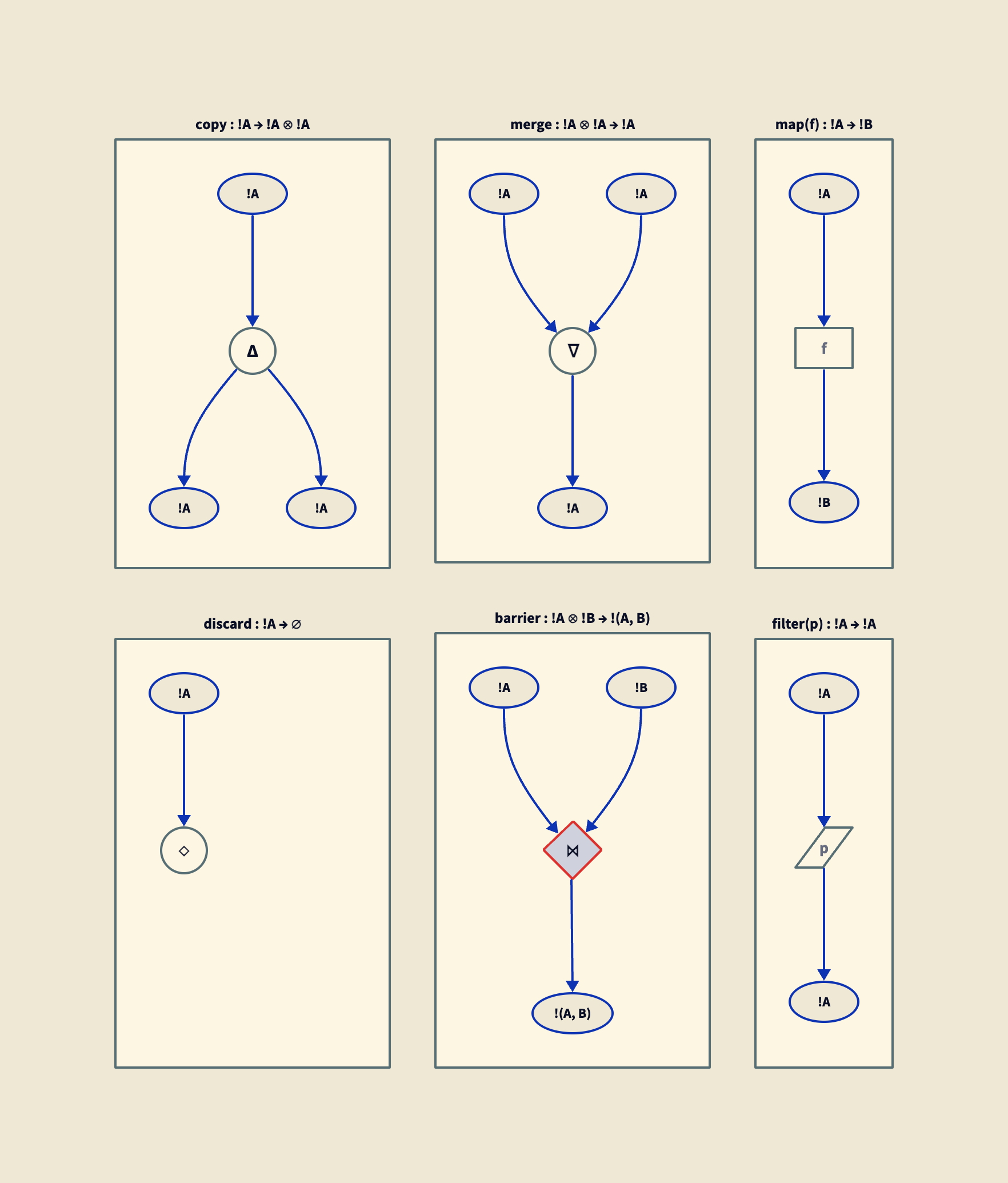

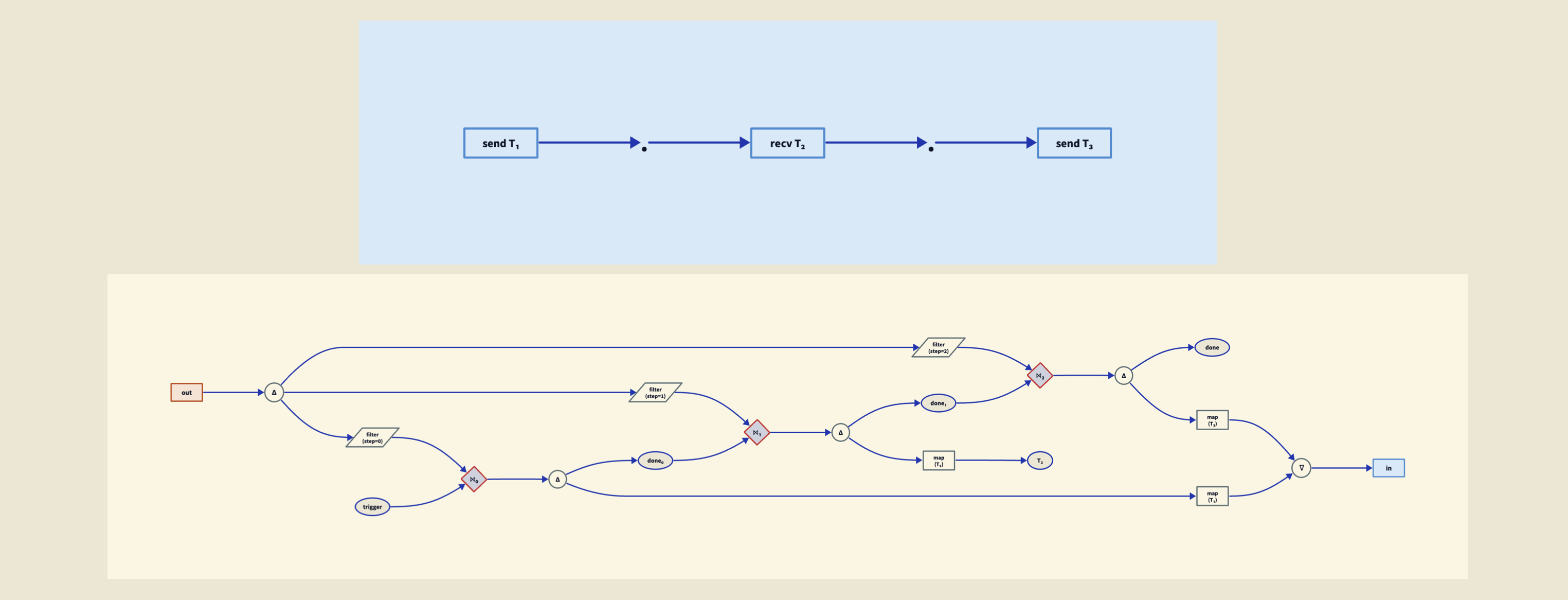

The next step beyond prose is a formal description. In the plumbing calculus, the program itself describes the network: the agent's position, its connections, the types on its channels, the other agents it communicates with, all declared in a few lines of typed syntax that the agent can read directly. The grammar is small enough that the whole thing fits comfortably in a prompt without consuming a significant fraction of the context window. The language is described in "A typed language for agent coordination." A formal description is both richer and more succinct than prose, and it is verifiable: the type checker confirms that the description is consistent, which no amount of carefully written Markdown can guarantee.

Even without a formal language, prose helps. A diagram would be better. A typed formal description is best. The point is that the agent should know where it is.

This is also where the caching separation mentioned above becomes concrete. Static situational context (the network topology, the agent's position) goes at the beginning of the prompt, where it is stable and cacheable. Dynamic runtime context (provenance, resource state) comes later, because it changes with every interaction. We discuss this separation in "Structural Prompt Preservation."

Knowledge of what is happening

The previous layers are about what the agent knows before it begins working. This one is about what it learns as messages arrive.

Runtime provenance. Who sent this message? This is dynamic, different for every message the agent receives. In our runtime for the plumbing calculus, every message flowing through a pipeline carries metadata in its transport frame: who emitted it, and the path it took through the network. This is not part of the algebra; it is an implementation choice we made in building the runtime, and a good one. The calculus describes how agents compose and how types constrain channels. The runtime, which executes those compositions, stamps provenance on messages as they flow. It then surfaces the immediate source to the agent as part of its context. The agent does not need to guess whether a message came from a trusted internal colleague or from an unverified external source. The infrastructure tells it.

It is worth distinguishing runtime provenance from document-level provenance, which is a different thing. In our document store, files carry metadata recording where they came from: downloaded from this URL, derived from that document, uploaded by this person. This is static provenance, attached to artefacts in stable storage. It matters for auditing and for curation, but the agent does not see it at runtime unless someone puts it in the prompt. Runtime provenance is different: it is per-message, stamped by the transport layer as messages flow through the graph, and surfaced to the agent as part of its working context. Both kinds are useful. They serve different purposes.

Why provenance matters

Franklin, Tomašev, Jacobs, Leibo, and Osindero (2026) recently published a taxonomy of adversarial attacks on autonomous AI agents, "AI Agent Traps." The central insight is that the attack surface is the environment, not the agent. Agents faithfully follow their instructions; the problem is that the instructions can be contaminated. Hidden commands in web pages, poisoned retrieval corpora, adversarial content that propagates from agent to agent through pairwise interaction.

Their proposed mitigations include mandatory provenance citation: agents should maintain verifiable traces of which sources contributed to their outputs, so that compromised outputs can be traced back to the poisoned input. This is sensible. In our runtime, messages carry their provenance in the transport frame. The agent can be shown the source; but even if it is not, the trace exists for post-hoc audit.

The immediate source is cheap to surface, a few tokens per message. The full trace (every node the message passed through) is more expensive, scaling with the depth of the pipeline. This is a tuning decision, not a limitation.

What happens without information flow constraints

The infectious jailbreak demonstrated by Gu et al. (2024) is a concrete example of what goes wrong. An adversarial image is injected into one agent's memory. That agent communicates with another, and the adversarial payload propagates. The receiving agent is now also compromised, and communicates the payload further. The process repeats until almost the entire population is jailbroken. Each infected agent becomes a propagation vector. The mechanism works because there are no constraints on what flows between agents; any message enters any other agent's full context.

Provenance alone does not prevent this. It gives you the forensic trail after the fact, but the damage propagates in real time. What you need, in addition to knowing where a message came from, is a way to constrain what is allowed to flow where. For that, you need labels.

From provenance to labels

This is not something we have implemented yet, but it is the direction we are working toward, and we can sketch what it looks like.

The LLMbda calculus (Garby, Gordon, and Sands, 2026) introduces information flow labels for LLM context assembly. Data carries a security level from a lattice. The type system enforces that high-labelled data cannot flow into low-labelled contexts without redaction. Their non-interference theorem (TINI) proves that an agent operating at level n never receives data labelled above n: it is erased before the prompt is constructed. The agent cannot leak what it was never given.

This works beautifully in the single-agent case. In the distributed case, with multiple agents communicating through typed channels, a message carries both its own labels (possibly at different depths in the structure) and a source (the agent that sent it, the path it took). Both matter:

-

Labels without source: you can enforce trust boundaries but you cannot trace back to the specific agent or document that introduced a problem. Prevention without forensics.

-

Source without labels: you can audit where everything came from but you cannot enforce what is allowed to flow where. Forensics without prevention.

-

The pair: both enforcement and auditability. The type checker enforces label constraints at compile time. The transport frame records source provenance at runtime. Prevention by construction, forensics by infrastructure.

The natural data structure for messages is therefore a (labels, source) pair. The algebra reasons about labels; the runtime records sources.

Returning to the infectious jailbreak: with label-checked channels, the contagion would break at the first inter-agent boundary where the labels are incompatible. The adversarial payload is redacted before reaching the receiving agent's context; the provenance trace still records which agent sent it. This is how the mechanism would work in principle; implementing it requires combining the LLMbda calculus's label system with a distributed runtime, which is the problem we are now working on.

The structural point

All of these kinds of knowledge (about the world, about itself, about its place in the network, about what is happening) share a common property. They are not policies that the agent is asked to follow. They are infrastructure that the agent is given. The agent does not need to remember to check the time, or to track its context usage, or to record where a message came from. The system provides this information, and the system records the traces.

The difference is concrete. The policy version of provenance is an instruction in the system prompt: "Always record where your information came from and cite your sources." The agent may follow this, or it may not; under adversarial pressure or context exhaustion, it will not. The infrastructure version is a transport frame that stamps every message with its origin before the agent sees it. The agent cannot remove the stamp. It cannot forget to apply it. A jailbroken agent still has its messages stamped on the way out, because the stamping happens in the runtime, not in the agent's reasoning. The same distinction applies at every layer: the policy version of context awareness is "keep track of how much context you have used"; the infrastructure version is a counter in the system prompt, updated by the runtime, that the agent reads but does not maintain.

This matters because the alternative, asking agents to self-report, is unreliable for exactly the reasons Franklin et al. document. An agent whose context has been poisoned will not reliably detect that its context has been poisoned. An agent that has been jailbroken will not reliably report that it has been jailbroken. Self-knowledge delivered by infrastructure is not subject to the same failure modes as self-knowledge maintained by the agent itself.

Or, more concisely: prompts are suggestions; structure is reality.

References

Chen, S., Gao, M., Sasse, K. et al. (2025). "When helpfulness backfires: LLMs and the risk of false medical information due to sycophantic behavior." npj Digital Medicine 8, 605.

Franklin, M., Tomašev, N., Jacobs, J., Leibo, J.Z., and Osindero, S. (2026). "AI Agent Traps." Google DeepMind. SSRN 6372438.

Garby, G., Gordon, A.D., and Sands, D. (2026). "The LLMbda Calculus: AI Agents, Conversations, and Information Flow." arXiv:2602.20064.

Gu, X., Zheng, X., Pang, T., Du, C., Liu, Q., Wang, Y., Jiang, J., and Lin, M. (2024). "Agent Smith: A Single Image Can Jailbreak One Million Multimodal LLM Agents Exponentially Fast." arXiv:2402.08567.

Malmqvist, L. (2025). "Sycophancy in Large Language Models: Causes and Mitigations." arXiv:2411.15287.

OpenAI (2025). "Sycophancy in GPT-4o." Incident postmortem.

Waites, W. (2026). "Artificial Organisations." arXiv:2602.13275.